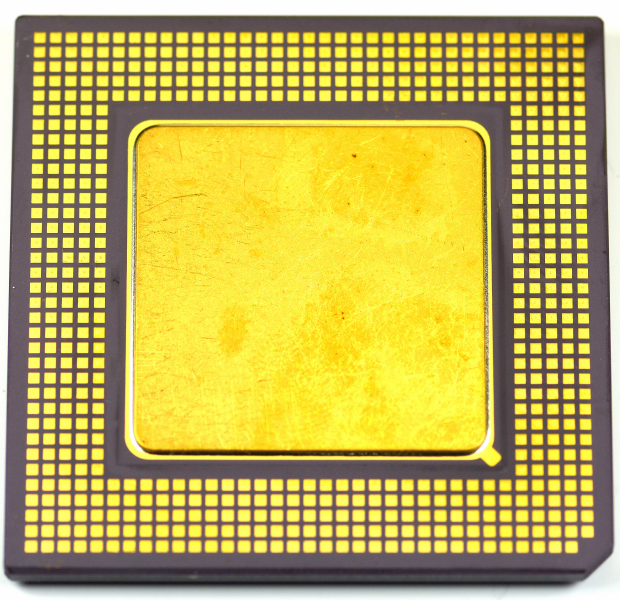

NEC D30700LRS-250 – R10000 T5-series microprocessor, successor to the R4400 series

Definition

The NEC D30700LRS-250 is a CPU in the R10000 T5 series, the MIPS microprocessor family that succeeded the R4400 series. Until around 1995, the R4400 was considered among the most powerful and widely deployed MIPS implementations in workstation and server environments. The D30700LRS-250 is therefore positioned as a high-end CPU intended for systems whose memory and I/O subsystems are designed for professional workloads.

This CPU was used in many Silicon Graphics platforms, including SGI Challenge, SGI Indigo2, SGI O2, SGI Origin, SGI Octane, SGI Octane2, and SGI Onyx, where the CPU + graphics + I/O combination was designed for rendering, 3D visualization, technical computing, and network services.

Family: “R10000 T5” and the transition from the R4400

The transition from the R4400 series to the R10000 T5 series should be understood as a generational change: it is not only about frequency and “speed grade”, but also about the evolution of the overall platform (cache, memory, controllers, and system balance). In these SGI contexts, the CPU is one element of a broader architecture: real-world performance depends on cache, memory width/latency, and the policies of the system controller.

Cache hierarchy: on-chip L1 and external L2

L1 cache: 32 + 32 KB

The D30700LRS-250 is described with a split L1 cache: 32 KB for instructions + 32 KB for data. Practically, this reduces conflicts between instruction fetches and data accesses, improving performance stability on mixed workloads (code + dataset).
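The effect of splitting L1 can be illustrated with a toy model. The following is a minimal sketch, not a model of the real R10000 cache: it assumes direct-mapped caches, a hypothetical 32-byte line size, and a contrived trace in which an instruction address and a data address collide in a unified cache. The names `DirectMappedCache` and `count_misses` are invented for this illustration.

```python
class DirectMappedCache:
    """Toy direct-mapped cache that only counts misses (illustrative assumption)."""

    def __init__(self, size_bytes, line_bytes=32):  # 32-byte line is hypothetical
        self.line_bytes = line_bytes
        self.num_lines = size_bytes // line_bytes
        self.tags = [None] * self.num_lines
        self.misses = 0

    def access(self, address):
        line_number = address // self.line_bytes
        index = line_number % self.num_lines
        tag = line_number // self.num_lines
        if self.tags[index] != tag:
            # Miss: the line currently stored at this index is replaced.
            self.tags[index] = tag
            self.misses += 1


def count_misses(trace, split):
    """Replay a trace of (kind, address) pairs, where kind is 'I' or 'D'."""
    if split:
        # Separate 32 KB instruction and 32 KB data caches.
        caches = {"I": DirectMappedCache(32 * 1024), "D": DirectMappedCache(32 * 1024)}
    else:
        # One unified 64 KB cache shared by both access kinds.
        shared = DirectMappedCache(64 * 1024)
        caches = {"I": shared, "D": shared}
    for kind, address in trace:
        caches[kind].access(address)
    # set() avoids counting the shared cache twice in the unified case.
    return sum(cache.misses for cache in set(caches.values()))


# Contrived loop: one instruction line and one data line placed 64 KB apart,
# so they map to the same index in the unified 64 KB cache.
trace = [("I", 0x00000), ("D", 0x10000)] * 100

split_misses = count_misses(trace, split=True)
unified_misses = count_misses(trace, split=False)
print("split:", split_misses, "unified:", unified_misses)
```

In this deliberately worst-case trace the unified cache evicts on every access, while the split caches miss only on the first touch of each line. Real workloads and associative caches behave far less dramatically, so the sketch shows the direction of the effect rather than its size.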

L2 cache: 8 MB external

The L2 cache is specified as 8 MB external. This is an important design point: a large external L2 tends to reduce misses to main memory for “big” workloads (large datasets and complex scenes), but it imposes board/module constraints (layout, timing, dedicated components). In an SGI system, this often translates to better under-load predictability at the cost of higher hardware complexity.
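The trade-off can be made concrete with the standard average-memory-access-time estimate for a two-level hierarchy. This is a rough sketch: every latency and miss rate below is a hypothetical figure chosen only to show the trend, not a measured value for the D30700LRS-250 or any SGI system, and the function name `average_access_time` is invented for this illustration.

```python
def average_access_time(l1_hit_ns, l1_miss_rate, l2_hit_ns, l2_miss_rate, memory_ns):
    """Average memory access time for an L1 + L2 + main memory hierarchy."""
    # Cost of an access that misses L1: the L2 lookup, plus main memory
    # for the fraction of those accesses that also miss L2.
    l2_level_ns = l2_hit_ns + l2_miss_rate * memory_ns
    return l1_hit_ns + l1_miss_rate * l2_level_ns


# Hypothetical inputs: identical hierarchy, only the L2 miss rate differs,
# standing in for a smaller L2 versus a larger L2 on a big dataset.
small_l2_ns = average_access_time(4.0, 0.05, 40.0, 0.40, 400.0)
large_l2_ns = average_access_time(4.0, 0.05, 40.0, 0.10, 400.0)

print(f"smaller L2: {small_l2_ns:.1f} ns per access")
print(f"larger L2:  {large_l2_ns:.1f} ns per access")
```

With these illustrative inputs the estimate falls from about 14 ns to about 8 ns per access. The formula does not capture the board-level cost (layout, timing, extra components) that the larger external cache imposes.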

Use in SGI workstations and servers: why this CPU fits there

The cited Silicon Graphics platforms are typically balanced to:

Reduce perceived latency on large datasets by leveraging a large L2.

Maintain strong overall throughput in graphics and I/O pipelines, where the CPU feeds complex subsystems (storage, networking, graphics).

Scale across configurations (from compact workstations to more server-class systems), where cache and memory often matter as much as the CPU core.

Sketch of the most important connections

               system interconnect (memory + I/O)
    ┌──────────────────────────────────────────────────────┐
    │        system controller / chipset (platform)        │
    │  RAM, I/O, arbitration, interrupts, timing handling  │
    └───────────────────────────┬──────────────────────────┘
                                │
                                ▼
                   ┌──────────────────────────┐
                   │    NEC D30700LRS-250     │
                   │   R10000 T5-series CPU   │
                   │    32+32 KB L1 cache     │
                   └───────────┬──────────────┘
                               │
                               ├────────► external L2 cache (up to 8 MB)
                               │
                               └────────► RAM + I/O (via system controller)

Table 1 – Identification data and specifications

| Characteristic | Indicative value |
|---|---|
| Device | NEC D30700LRS-250 |
| Series | R10000 T5 |
| Class | CPU microprocessor (MIPS, workstation/server class) |
| Historical positioning | Successor to the R4400 series |
| L1 cache | 32 KB instruction + 32 KB data |
| L2 cache | 8 MB external |
| Deployment platforms (examples) | Silicon Graphics: Challenge, Indigo2, O2, Origin, Octane, Octane2, Onyx |

Table 2 – Operational and design considerations

| Aspect | Practical meaning |
|---|---|
| R4400 → R10000 T5 transition | Platform evolution: not only clock, but cache hierarchy and system balance |
| Split 32+32 KB L1 | Less contention between instructions and data, more stable mixed-workload performance |
| 8 MB external L2 | Reduces main-memory misses on large datasets; requires more complex hardware design (timing and layout) |
| Platform dependence | Real performance depends strongly on the memory/I/O controller and overall configuration |
| Workstation/server target | Consistent with SGI systems for graphics, 3D visualization, technical computing, and I/O-intensive workloads |
